Sitemaps are an easy way for webmasters to inform search engines about pages on their sites that are available for crawling. In its simplest form, a Sitemap is an XML file that lists URLs for a site along with additional metadata about each URL (when it was last updated, how often it usually changes, and how important it is, relative to other URLs in the site) so that search engines can more intelligently crawl the site.
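To make the format concrete, here is a minimal Python sketch that builds such a file. It is only an illustration under stated assumptions: the `build_sitemap` helper and the example.blogspot.com URLs are hypothetical, and the tag names (`loc`, `lastmod`, `changefreq`, `priority`) and namespace come from the public Sitemap protocol rather than from any particular generator tool.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the Sitemap protocol (sitemaps.org).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return a Sitemap XML string for an iterable of entry dicts.

    Hypothetical helper for illustration only.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for entry in entries:
        url = ET.SubElement(urlset, "url")
        # <loc> is required; the other three tags are optional hints.
        for tag in ("loc", "lastmod", "changefreq", "priority"):
            if tag in entry:
                ET.SubElement(url, tag).text = str(entry[tag])
    return ET.tostring(urlset, encoding="unicode")


# Made-up example data, not taken from any real blog.
entries = [
    {
        "loc": "https://example.blogspot.com/",
        "changefreq": "daily",
        "priority": 1.0,
    },
    {
        "loc": "https://example.blogspot.com/2020/01/first-post.html",
        "lastmod": "2020-01-15",
        "changefreq": "monthly",
        "priority": 0.5,
    },
]

sitemap_xml = build_sitemap(entries)
print(sitemap_xml)
```

Each `<url>` element carries one page address plus whichever optional hints are known for it; crawlers are free to ignore the hints.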

Web crawlers usually discover pages from links within the site and from other sites. Sitemaps supplement this data to allow crawlers that support Sitemaps to pick up all URLs in the Sitemap and learn about those URLs using the associated metadata. Using the Sitemap protocol does not guarantee that web pages are included in search engines, but provides hints for web crawlers to do a better job of crawling your site.

This section applies both to regular Blogger blogs (those that use a .blogspot.com address) and to Blogger blogs that use a custom domain (like postsecret.com).

Here’s what you need to do to expose your blog’s complete site structure to search engines with the help of an XML sitemap.

- Open the Sitemap Generator and type the full address of your blogspot blog (or your self-hosted Blogger blog).

- Click the Create Sitemap button and this tool will instantly generate the necessary text for your sitemap. Copy the entire generated text to your clipboard (see screenshot below).

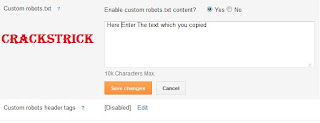

- Next, go to your Blogger dashboard and, under Settings -> Search Preferences, enable the Custom robots.txt option (available in the Crawling and Indexing section). Paste the clipboard text there and save your changes.

- And we are done. Search engines will automatically discover your XML sitemap files via the robots.txt file and you don’t have to ping them manually.
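As a rough illustration of why the robots.txt step works, the sketch below builds a small robots.txt containing a `Sitemap:` line and reads it back the way a crawler might. The exact text produced by the Sitemap Generator is not shown in this post, so the rules and the sitemap URL here are assumptions made for the example; `build_robots_txt` is a hypothetical helper, while `RobotFileParser.site_maps()` is part of the Python standard library (3.8 and later).

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(sitemap_url):
    """Return robots.txt text that advertises one sitemap.

    Hypothetical helper; the rules below are assumed, not the
    generator's real output.
    """
    lines = [
        "User-agent: *",
        "Allow: /",
        "",
        # The Sitemap directive is independent of any User-agent group.
        f"Sitemap: {sitemap_url}",
    ]
    return "\n".join(lines) + "\n"


# Made-up address used purely for illustration.
robots_txt = build_robots_txt("https://example.blogspot.com/sitemap.xml")

# Parse the text the way a sitemap-aware crawler could.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
discovered = parser.site_maps()
print(discovered)
```

Because the sitemap location is declared inside robots.txt, a crawler that fetches that file can find the sitemap without a separate submission.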
